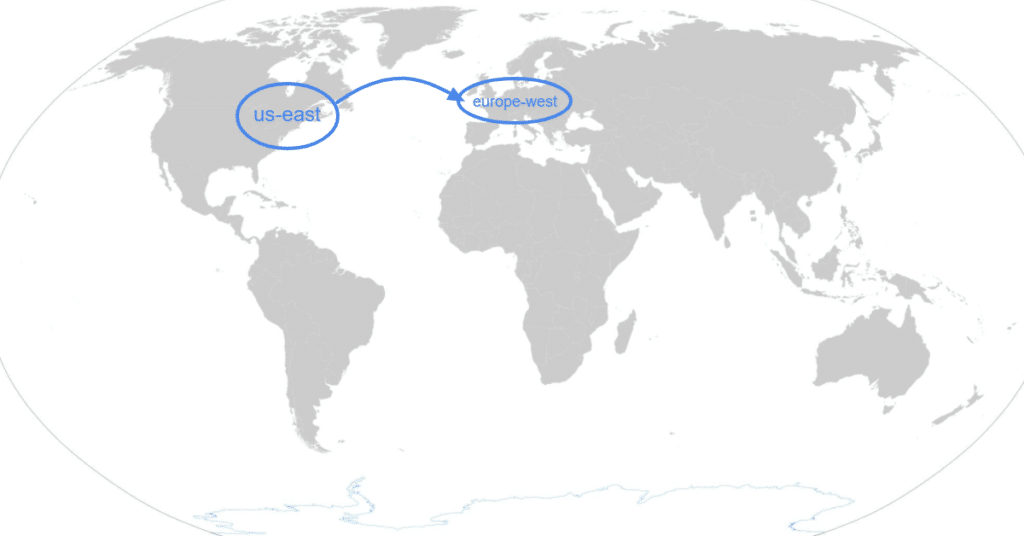

We initiated a Google Cloud Big Query for one of our clients under an organisation and, for internal reasons, chose a region in the US. The client created a new organisation and of course he needs to have his data in the European Union (GDPR, etc.).

Unfortunately, it is not possible to easily change the region of a Big Query dataset nor export and import the whole dataset. Google help mentions that you can contact support to assist you with a transfer, but I was skeptical about this possibility, and we decided to do a transfer manually.

There is no feed for data extracts and loads (I recommend that you verify this information as you work your way through this article).

Step-by-step guide

1. Connect GA4 to new Big Query project

In GA4, switch to your new Big Query with the updated region. In 24 hours, a new Big Query dataset with an initial table will be created. It will be easier to perform the next steps if a dataset already exists with a prepared schema.

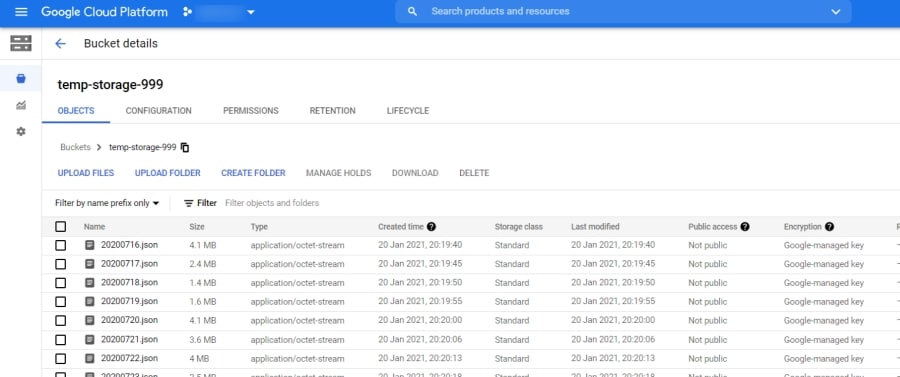

2. Create a bucket storage file

If you create a bucket storage file inside the same project, there is no need to update credentials— you will have access by default.

3. Extract all data to the bucket storage

You can extract tables one by one in your user interface. However, if you have 180 tables as we did, this will be an extremely slow process. There is one positive: tables have standard names YYYY-MM-DD. It is possible to prepare everything in Excel, e.g., via the CONCATENATE function, and afterwards to copy all 180 rows into the Google Cloud shell.

Firstly you need to choose a project in which you are going to work:

gcloud config set project project-name

Afterwards, you can copy all commands in bulk:

bq extract --destination_format NEWLINE_DELIMITED_JSON 'analytics_xxx.events_20200716' gs://temp-storage-999/20200716.json

bq extract --destination_format NEWLINE_DELIMITED_JSON 'analytics_xxx.events_20200717' gs://temp-storage-999/20200717.json

3. Import tables into the new Big Query account

It is necessary that you have sufficient credential levels to access both the new and old projects.

Afterwards you can copy all of the load commands to the Google Cloud shell:

bq load --autodetect --source_format=NEWLINE_DELIMITED_JSON 'analytics_xxx.events_20200716' gs://temp-storage-999/20200716.json

bq load --autodetect --source_format=NEWLINE_DELIMITED_JSON 'analytics_xxx.events_20200717' gs://temp-storage-999/20200717.json

bq load --autodetect --source_format=NEWLINE_DELIMITED_JSON4. Shut down the old project

And that’s it—the data has been transferred to the EU region.